PrecepTron

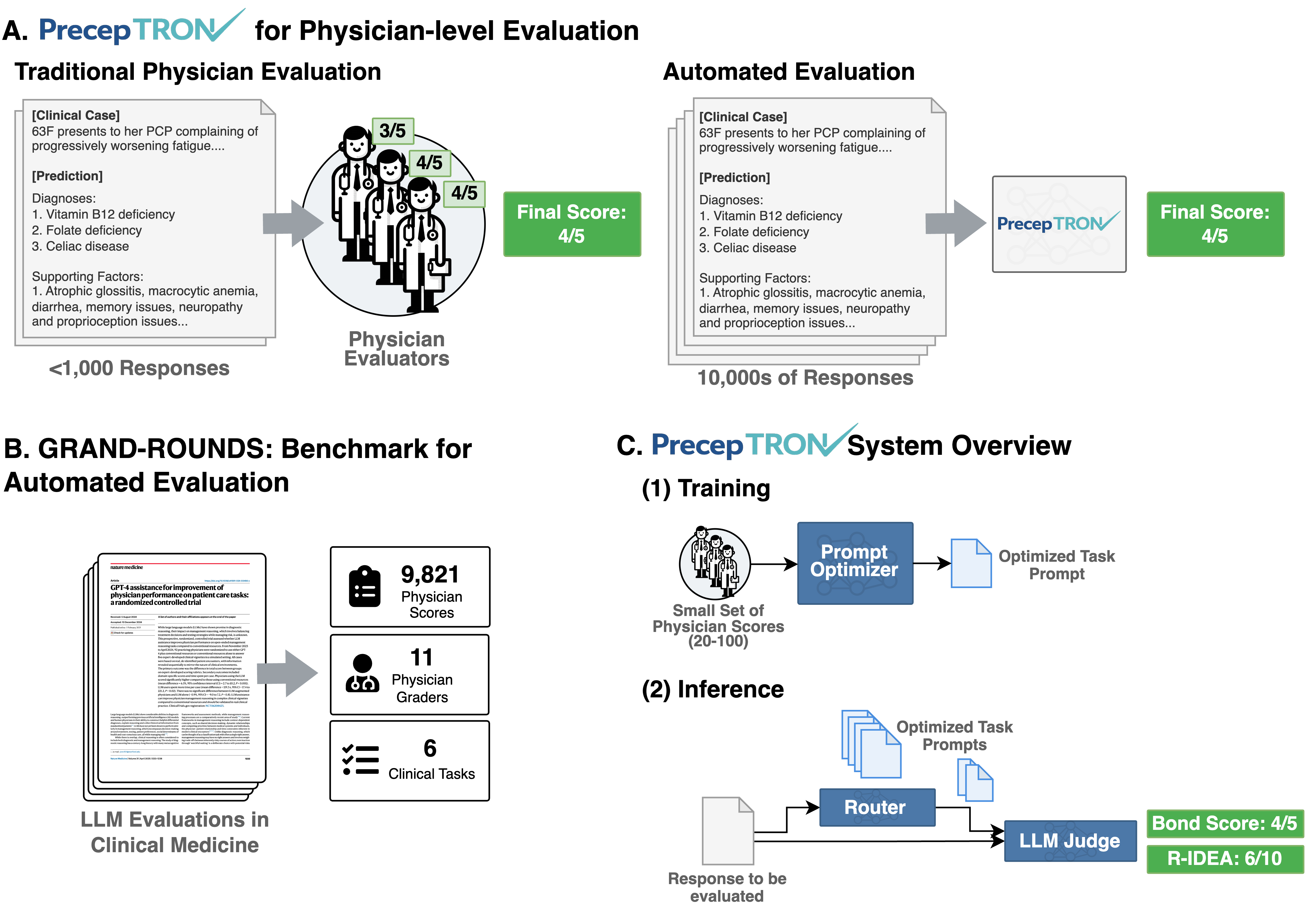

Can you trust an LLM to grade clinical reasoning like a physician?

PrecepTron is an open benchmark for answering that question. We release thousands of real physician-scored responses from published clinical studies — spanning differential diagnosis, management planning, and consultation quality — so you can measure whether your LLM judge actually agrees with expert clinicians.

- 5,250 scored responses
- 9,821 physician scores
- 121 physicians in baseline
- 11 physician evaluators
- 6 clinical tasks
- 7 source studies

What is PrecepTron?

LLMs are increasingly used as automated graders for medical AI research — but how do you know the grader itself is reliable? PrecepTron gives you a way to find out.

We collected physician scores from studies at Beth Israel Deaconess Medical Center (BIDMC) and other institutions, covering tasks like differential diagnosis, clinical management, and consultation quality. With this ground truth, you can:

- Validate any LLM judge against real physician scores across multiple clinical tasks.

- Benchmark new models against physician baselines from prior studies, without needing new human evaluation.

- Score clinical responses with a single Python function call using standardized, published rubrics.

- Use optimized judge prompts that we release alongside the benchmark, tuned via GEPA to maximize agreement with physician scores.
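As a rough illustration of the first use case above, validating a judge comes down to comparing its scores with physician scores on the same responses. The sketch below computes exact agreement and unweighted Cohen's kappa in plain Python. The score lists are made-up illustrative values, not PrecepTron data, and the function names are hypothetical; the agreement metrics PrecepTron itself reports may differ.

```python
from collections import Counter


def exact_agreement(judge, physician):
    """Fraction of responses where the two scores match exactly."""
    if len(judge) != len(physician) or not judge:
        raise ValueError("score lists must be non-empty and the same length")
    return sum(j == p for j, p in zip(judge, physician)) / len(judge)


def cohen_kappa(judge, physician):
    """Unweighted Cohen's kappa: exact agreement corrected for chance."""
    n = len(judge)
    observed = exact_agreement(judge, physician)
    judge_counts = Counter(judge)
    physician_counts = Counter(physician)
    # Chance agreement: probability that both raters give the same score
    # if each drew independently from their own score distribution.
    expected = sum(
        (judge_counts[k] / n) * (physician_counts[k] / n)
        for k in set(judge_counts) | set(physician_counts)
    )
    if expected == 1.0:
        # Both raters used one identical score throughout; kappa is undefined.
        return float("nan")
    return (observed - expected) / (1.0 - expected)


# Illustrative values only (hypothetical 1-5 rubric scores).
physician_scores = [5, 4, 3, 5, 2, 4, 1, 5]
judge_scores = [5, 4, 4, 5, 2, 3, 1, 5]

print(f"exact agreement: {exact_agreement(judge_scores, physician_scores):.2f}")  # 0.75
print(f"Cohen's kappa:   {cohen_kappa(judge_scores, physician_scores):.2f}")  # 0.67
```

Because rubric scores are ordinal, a weighted kappa or a rank correlation may be a better fit when near-misses should be penalized less than distant disagreements.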

For detailed breakdowns of the dataset by task, study, and model, see the Dataset page.

Get Started

Install PrecepTron and score a clinical response in under a minute:

```python
from openai import OpenAI

from preceptron import score

client = OpenAI()

# No `task=`: a router LLM picks the best-matching rubric(s) from the
# context you provide. Here we give a differential plus the known final
# diagnosis, and the router routes the response to cpc_bond (and, if a
# case vignette were also provided, to diagnostic_reasoning too).
result = score(
    response="1. Pheochromocytoma\n2. Thyroid storm\n3. Carcinoid syndrome",
    final_diagnosis="Pheochromocytoma",
    model="gpt-4o",
    client=client,
)

print(result["router"]["tasks"])  # ['cpc_bond']
print(result["score"])  # 5
print(result["justification"])  # LLM's reasoning
```

Works with OpenAI, Anthropic, Azure, OpenRouter, or any OpenAI-compatible API.

Vignettes

Step-by-step guides for common workflows:

- Validating Your LLM Judge -- Run the full benchmark and measure agreement with physicians.

- Using an LLM Judge for a Task -- Score individual clinical responses with the `score()` API.

- Benchmarking a New Model Against a Physician Baseline -- Run a new LLM on published cases and compare to the physician baseline using the autograder.

- Generating Truncation Curves -- Trace how diagnostic accuracy evolves as more of a clinical case is revealed.
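To give a feel for the truncation-curve idea in the last item, here is a minimal sketch that reveals a growing prefix of a case and scores each prefix. Everything in it is an assumption made for illustration: the helper names, the choice to truncate by word fraction, the toy case text, and the stand-in scorer (which simply counts key findings in the revealed text). In a real workflow the scorer would instead have a model produce a diagnosis from the truncated case and grade that output; the vignette's actual procedure may differ.

```python
import math
from typing import Callable, List, Sequence, Tuple


def truncate_case(case_text: str, fraction: float) -> str:
    """Return the leading `fraction` of the case, cut on a word boundary."""
    if not 0.0 < fraction <= 1.0:
        raise ValueError("fraction must be in (0, 1]")
    words = case_text.split()
    keep = max(1, math.ceil(len(words) * fraction))
    return " ".join(words[:keep])


def truncation_curve(
    case_text: str,
    fractions: Sequence[float],
    score_fn: Callable[[str], float],
) -> List[Tuple[float, float]]:
    """Score each truncated prefix and return (fraction, score) pairs."""
    return [(f, score_fn(truncate_case(case_text, f))) for f in fractions]


# Toy inputs, invented for this example.
CASE = (
    "Woman, 45, with episodic headaches, palpitations, sweating, "
    "and blood pressure of 210/120."
)
KEY_FINDINGS = ("headaches", "palpitations", "sweating")


def toy_findings_score(revealed_text: str) -> int:
    """Stand-in scorer: how many key findings are visible so far."""
    text = revealed_text.lower()
    return sum(term in text for term in KEY_FINDINGS)


curve = truncation_curve(CASE, [0.25, 0.5, 0.75, 1.0], toy_findings_score)
for fraction, value in curve:
    print(f"{fraction:.0%} revealed -> score {value}")
```

Passing the scorer in as a callable keeps the truncation logic independent of any particular model or judge, so the stand-in can be swapped for a real grading function without changing the loop.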

Source Studies

PrecepTron aggregates physician-scored data from the following published studies:

| Study | Title | Tasks | Paper |
|---|---|---|---|
| Goh et al. 2025 | GPT-4 assistance for improvement of physician performance on patient care tasks: a randomized controlled trial | Management reasoning | Nature Medicine |
| Goh et al. 2024 | Large language model influence on diagnostic reasoning: a randomized clinical trial | Diagnostic reasoning | JAMA Network Open |
| Brodeur et al. 2025 | Superhuman performance of a large language model on the reasoning tasks of a physician | Diagnostic reasoning, management reasoning, CPC Bond, BI triage | arXiv |
| Buckley et al. 2024 | Multimodal foundation models exploit text to make medical image predictions | CPC Bond, CPC management | arXiv |
| Buckley et al. 2025 | Comparison of frontier open-source and proprietary large language models for complex diagnoses | CPC Bond | JAMA Health Forum |
| Cabral et al. 2024 | Clinical reasoning of a generative artificial intelligence model compared with physicians | R-IDEA | JAMA Internal Medicine |
| Kanjee et al. 2023 | Accuracy of a generative artificial intelligence model in a complex diagnostic challenge | CPC Bond | JAMA |